Redeveloping TCP from the ground up

Published: 2023-11-28 (last updated: 2024-10-11)

The Transmission Control Protocol (TCP) is one of the main Internet protocols. Usually spoken on top of the Internet Protocol (legacy version 4 or version 6), it provides a reliable, ordered, and error-checked stream of octets. An application that uses TCP gets these properties for free (in contrast to UDP).

As is common for Internet protocols, TCP is specified in a series of so-called requests for comments (RFCs). The latest revised version, from August 2022, is RFC 9293; the initial one was RFC 793 from September 1981.

My brief personal TCP story

My interest in TCP started back in 2006 when we worked on a network stack in Dylan (these days abandoned). Ever since, I wanted to understand the implementation tradeoffs in more detail, including attacks and how to prevent a TCP stack from being vulnerable.

In 2012, I attended ICFP in Copenhagen while a PhD student at ITU Copenhagen. There, Peter Sewell gave an invited talk, "Tales from the jungle", about rigorous methods for real-world infrastructure (C semantics, hardware (concurrency) behaviour of CPUs, TCP/IP, and likely more). Working on formal specifications myself (in my dissertation), and having a strong interest in real systems, I was immediately hooked by his perspective.

To dive a bit more into network semantics: the work done on TCP by Peter Sewell et al. is a formal specification (or model) of TCP/IP and the Unix sockets API, developed in HOL4. It is a labelled transition system with nondeterministic choices, and the model itself is executable. It has been validated against the real world by collecting thousands of traces on Linux, Windows, and FreeBSD, and checking each trace against the model for validity. This approach copes with the fact that implementations interpret the English prose of the RFCs differently. The network semantics research found several issues in existing TCP stacks and reported them upstream to have them fixed (though there is still some special treatment, e.g., for the "BSD listen bug").
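To make the idea of checking traces against a nondeterministic labelled transition system more concrete, here is a minimal sketch in Python. It is a toy and not the HOL4 model: the states, the labels, and the `TRANSITIONS` table are made up for illustration and cover only a tiny fragment of connection setup.

```python
from typing import Dict, Iterable, Set, Tuple

State = str
Label = str

# Toy transition relation (an assumption, not taken from the formal model):
# (state, label) -> set of possible successor states.
TRANSITIONS: Dict[Tuple[State, Label], Set[State]] = {
    ("CLOSED", "send_syn"): {"SYN_SENT"},
    ("SYN_SENT", "recv_syn_ack"): {"ESTABLISHED"},
    ("SYN_SENT", "recv_rst"): {"CLOSED"},
    # Nondeterministic choice: on a timer event the stack may either
    # retransmit the SYN (stay in SYN_SENT) or give up (go to CLOSED).
    ("SYN_SENT", "timer"): {"SYN_SENT", "CLOSED"},
    ("ESTABLISHED", "recv_fin"): {"CLOSE_WAIT"},
}


def step(states: Set[State], label: Label) -> Set[State]:
    """Return every state reachable from any of `states` via `label`."""
    successors: Set[State] = set()
    for state in states:
        successors |= TRANSITIONS.get((state, label), set())
    return successors


def accepts(trace: Iterable[Label], initial: State = "CLOSED") -> bool:
    """A trace is valid if at least one sequence of choices explains it."""
    possible = {initial}
    for label in trace:
        possible = step(possible, label)
        if not possible:
            # No allowed behaviour matches the observed label.
            return False
    return True
```

Here `accepts(["send_syn", "timer", "recv_syn_ack"])` holds, because one of the two choices after the timer event (retransmitting) explains the rest of the trace, while `accepts(["send_syn", "recv_fin"])` does not. Tracking the set of possible states is what allows a single model to admit several differing implementations.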

In 2014, I joined Peter's research group in Cambridge to continue their work on the model: updating to more recent versions of HOL4 and PolyML, revising the test system to use DTrace, updating to a more recent FreeBSD network stack (from FreeBSD 4.6 to FreeBSD 10), and finally getting the journal paper (author's copy) published. At the same time, the MirageOS melting pot was happening at the University of Cambridge, where David and I contributed OCaml-TLS and other things.

My intention was to understand TCP better and use the specification as a basis for a TCP stack for MirageOS. The existing one (which is still used) has technical debt: a high ratio of issues to lines of code. The Lwt monad is ubiquitous, which makes testing and debugging pretty hard, and utilising multiple cores with OCaml Multicore won't be easy either. Plus, it has various resource leaks, and there is no active maintainer. But honestly, it works fine on a local network and with well-behaved traffic. It doesn't work that well on the wild Internet with its variety of broken implementations. Apart from the resource leakage, which made me implement things such as restart-on-failure in Albatross, there are certain connection states which will never be exited.

The rise of µTCP

Back in Cambridge, I didn't manage to write a TCP stack based on the model, but in 2019, I restarted that work and got µTCP (the formal model manually translated to OCaml) to compile and do TCP session setup and teardown. Since the model uses nondeterminism, it couldn't be translated one-to-one into an executable program: there are places where decisions have to be made. Due to other projects, I worked only briefly on µTCP in 2021 and 2022, but finally, in the summer of 2023, I motivated myself to push µTCP into a usable state. So far I've spent 25 days in 2023 on µTCP. Thanks to Tarides for supporting my work.

Since late August, we have been running some unikernels using µTCP, e.g., the retreat website. This allows us to observe µTCP and to find and solve issues that occur in the real world. It turned out that the model is not always correct (e.g., there is no retransmit timer in the close wait state, which prevents proper session teardowns). We report statistics about how many TCP connections are in which state to an Influx time series database and view graphs rendered by Grafana. If there are connections that are stuck for multiple hours, this indicates a resource leak that should be addressed. Grafana was tremendously helpful in finding out where to look for resource leaks. Still, there's work to do to understand the behaviour: to look at what the model does, what µTCP does, what the RFC says, and eventually what existing deployed TCP stacks do.

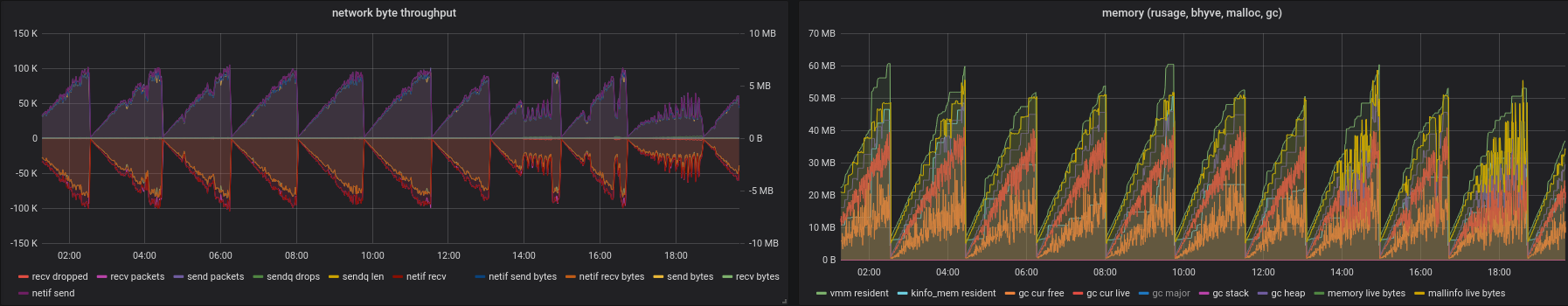
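The kind of bookkeeping behind such graphs can be sketched in a few lines of Python. This is only an illustration under assumptions: the `Conn` record, the two-hour threshold, and the set of ignored states are hypothetical and not taken from µTCP or from our metrics code.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Conn:
    """Hypothetical per-connection record kept for monitoring."""
    conn_id: str
    state: str               # e.g. "SYN_SENT", "ESTABLISHED", "CLOSE_WAIT"
    entered_state_at: float  # timestamp in seconds


def state_counts(conns: Iterable[Conn]) -> Counter:
    """Number of connections per TCP state (the value one would report)."""
    return Counter(conn.state for conn in conns)


def stuck_connections(
    conns: Iterable[Conn],
    now: float,
    limit_s: float = 2 * 3600.0,
    ignore: Tuple[str, ...] = ("ESTABLISHED", "LISTEN"),
) -> List[Conn]:
    """Connections that have stayed in a transient state for longer than limit_s.

    Long-lived ESTABLISHED or LISTEN entries are legitimate, so they are
    skipped (an assumption of this sketch).
    """
    return [
        conn
        for conn in conns
        if conn.state not in ignore and now - conn.entered_state_at > limit_s
    ]
```

A state count that only ever grows, or a non-empty result from `stuck_connections`, is the sort of signal that points at a leak worth investigating.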

The secondary nameserver issue

One of our secondary nameservers attempts to receive zones (via AXFR using TCP) from another nameserver that is currently not running. Thus, the remote host replies to each SYN packet with a corresponding RST. Below, I graphed for our nameserver the network utilisation over time on the left (sent data/packets on the positive y-axis, received on the negative, time on the x-axis) and the memory usage over time on the right (bytes on the y-axis, time on the x-axis). You can observe that both increase over time, and roughly every 3 hours, the unikernel hits its configured memory limit (64 MB), crashes with out of memory, and is restarted. The graph below is using the mirage-tcpip stack.

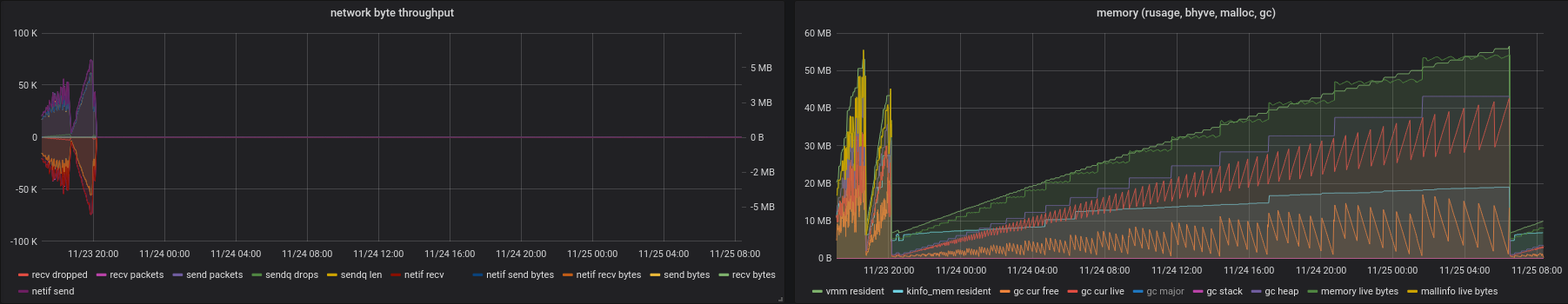

Now, after switching over to µTCP (graphed below), there's much less network utilisation, and the memory limit is only reached after 36 hours, which is a great result. Still, it is not very satisfying that the unikernel leaks memory. On their left side, both graphs contain a few hours of mirage-tcpip; shortly after 20:00 on Nov 23rd, µTCP got deployed.

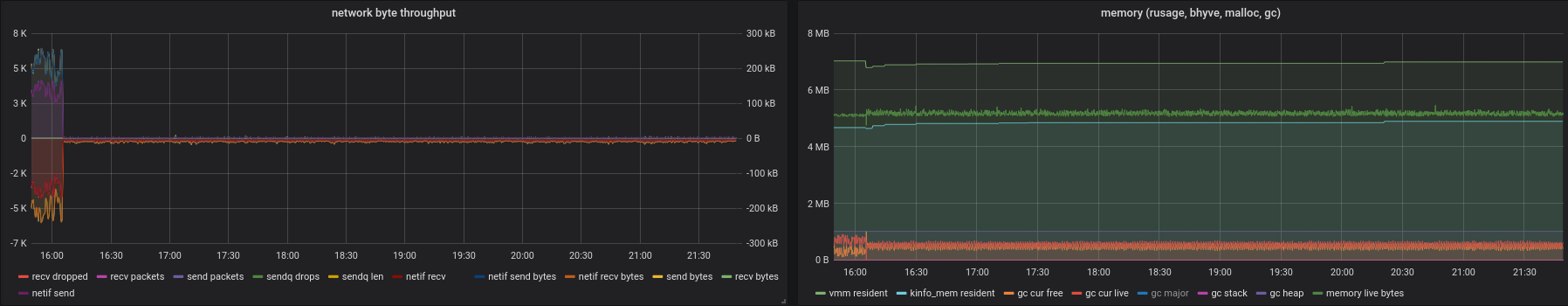

Investigating the involved parts showed that an unestablished TCP connection had been registered at the MirageOS layer, but the pure core did not expose an event from the received RST saying that the connection had been cancelled. This means the MirageOS layer piled up all the connection attempts and didn't inform the application that the connection couldn't be established. Note that the MirageOS layer is not code derived from the formal model, but boilerplate for (a) side effects (IO) and (b) meeting the needs of the TCP.S module type (so that µTCP can be used as a drop-in replacement for mirage-tcpip). Once this was well understood, developing the required code changes was straightforward. The graph shows that the fix was deployed at 15:25. The memory usage is constant afterwards, but the network utilisation increased enormously.
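The shape of that fix can be sketched as follows. This is a simplified illustration in Python, not the µTCP code: `handle_segment`, `Layer`, the event names, and the callback interface are all hypothetical. The point is only that the pure core returns an event for a received RST, and the effectful layer uses it to drop its bookkeeping and to tell the application.

```python
from typing import Callable, Dict, FrozenSet, List, Tuple

ConnId = Tuple[str, int]    # hypothetical: (remote address, remote port)
Event = Tuple[str, ConnId]  # ("established" | "reset", connection id)


def handle_segment(
    connecting: FrozenSet[ConnId], conn_id: ConnId, flags: FrozenSet[str]
) -> Tuple[FrozenSet[ConnId], List[Event]]:
    """Pure core: no IO, returns the new state plus events for the layer above."""
    if conn_id not in connecting:
        return connecting, []
    if "RST" in flags:
        # Expose the reset as an event instead of dropping it silently.
        return connecting - {conn_id}, [("reset", conn_id)]
    if {"SYN", "ACK"} <= flags:
        return connecting - {conn_id}, [("established", conn_id)]
    return connecting, []


class Layer:
    """Effectful layer: tracks who is waiting for which connection attempt."""

    def __init__(self) -> None:
        self.connecting: FrozenSet[ConnId] = frozenset()
        self.waiters: Dict[ConnId, Callable[[str], None]] = {}

    def connect(self, conn_id: ConnId, on_result: Callable[[str], None]) -> None:
        self.connecting = self.connecting | {conn_id}
        self.waiters[conn_id] = on_result

    def on_segment(self, conn_id: ConnId, flags: FrozenSet[str]) -> None:
        self.connecting, events = handle_segment(self.connecting, conn_id, flags)
        for kind, cid in events:
            # Remove the entry so failed attempts do not pile up ...
            waiter = self.waiters.pop(cid, None)
            if waiter is not None:
                # ... and inform the application about the outcome.
                waiter("ok" if kind == "established" else "refused")
```

Without the "reset" event, the entry in `waiters` would stay around forever, which is the leak described above in miniature.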

Now, the network utilisation is unwanted. It was previously hidden by the application waiting forever for the TCP connection to get established. Our bug fix uncovered another issue, a tight loop:

- The nameserver attempts to connect to the other nameserver (`request`);
- This results in a `TCP.create_connection` which errors after one roundtrip;
- This leads to a `close`, which attempts a `request` again.

This is unnecessary since the DNS server code has a timer to attempt to connect to the remote nameserver periodically (taking a break between attempts). After understanding this behaviour, we worked on the fix and redeployed the nameserver again. On the left edge, the graph still has the tight loop (so you have a baseline for comparison), and at 16:05, we deployed the fix. Since then it looks pretty smooth, both in memory usage and in network utilisation.

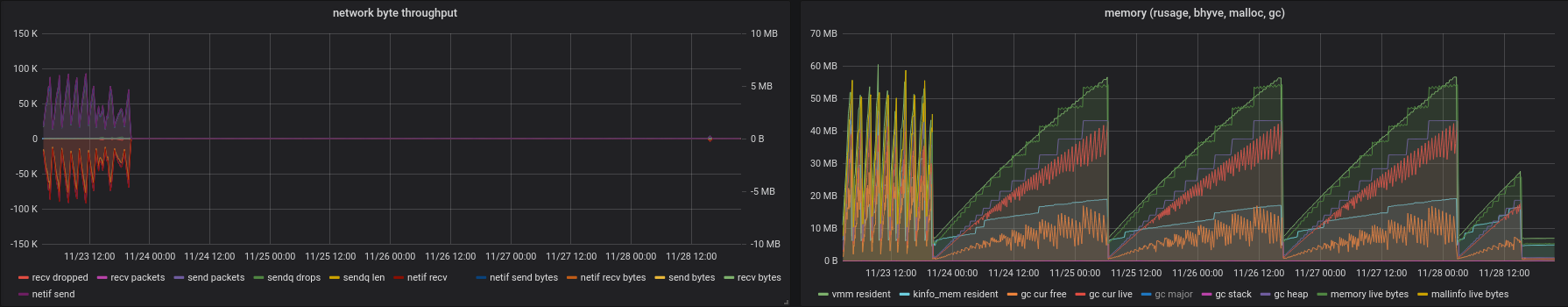
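Why the tight loop matters so much for network utilisation can be seen from a back-of-the-envelope simulation. The Python sketch below uses made-up numbers (a 10 ms roundtrip, a pause of roughly 5 seconds between attempts); they are assumptions for illustration, not measurements from our deployment.

```python
def count_attempts(duration_ms: int, rtt_ms: int, pause_ms: int) -> int:
    """Count connection attempts within duration_ms.

    Each attempt fails after one roundtrip (the remote side answers the
    SYN with an RST); the next attempt starts after pause_ms.
    """
    now_ms = 0
    attempts = 0
    while now_ms < duration_ms:
        attempts += 1
        now_ms += rtt_ms + pause_ms
    return attempts


# Tight loop: `close` immediately triggers the next `request`.
tight = count_attempts(duration_ms=60_000, rtt_ms=10, pause_ms=0)

# Timer-driven retry: wait between attempts (hypothetical interval).
paced = count_attempts(duration_ms=60_000, rtt_ms=10, pause_ms=4_990)
```

With these numbers, the tight loop makes 6000 attempts per minute, while the timer-driven variant makes 12. Each attempt costs at least one SYN and one RST on the wire, so the difference shows up directly in the graph.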

To give you the entire picture, below is the graph where you can spot the mirage-tcpip stack (lots of network, restarting every 3 hours), µTCP-without-informing-application (run for 3 * ~36 hours), DNS-server-high-network-utilization (which only lasted for a brief period, thus it is more a point in the graph), and finally the unikernel with both fixes applied.

Conclusion

What can we learn from that? Choosing convenient tooling is crucial for effective debugging. Also, fixing one issue may uncover other issues. And of course, mirage-tcpip was running with the same DNS server that had the tight reconnect loop. But the bottom line is: should such an application lead to memory leaks? I don't think so. My approach is that all core network libraries should work in a non-resource-leaky way with any kind of application on top of them. When a TCP connection returns an error (and thus is destroyed), the TCP stack should hold no more resources for that connection.

We'll take more time to investigate issues of µTCP in production, plan to write further documentation and blog posts, and hopefully soon will be ready for an initial public release. In the meantime, you can follow our development repository.

We at Robur have been working as a collective since 2018, funded by public funding, commercial contracts, and donations. Our mission is to get sustainable, robust, and secure MirageOS unikernels developed and deployed. Running your own digital communication infrastructure should be easy, including trustworthy binaries and smooth upgrades. You can help us continue our work by donating (select Robur from the drop-down or put "donation Robur" in the purpose of the bank transfer).

If you have any questions, the best way to reach us is via email to team AT robur DOT coop.